Defending against adversarial attacks by randomized diversification

Code: PyTorch

If you have questions about our PyTorch code, please contact us.

The research was supported by the SNF project No. 200021_182063.

Abstract

The vulnerability of machine learning systems to adversarial attacks calls into question their use in many applications. We propose randomized diversification as a defense strategy. We introduce a multi-channel architecture in a gray-box scenario, which assumes that the architecture of the classifier and the training data set are known to the attacker. The only things the attacker cannot access are the secret key and the internal states of the system at test time. The defender processes an input in multiple channels. Each channel introduces its own randomization in a special transform domain based on a secret key shared between the training and testing stages. Such transform-based randomization with a shared key preserves the gradients in key-defined sub-spaces for the defender, but it prevents gradient back propagation and the creation of various bypass systems by the attacker. An additional benefit of multi-channel randomization is the aggregation that fuses soft outputs from all channels, thus increasing the reliability of the final score. The sharing of a secret key creates an information advantage for the defender. Experimental evaluation demonstrates increased robustness of the proposed method to a number of known state-of-the-art attacks.

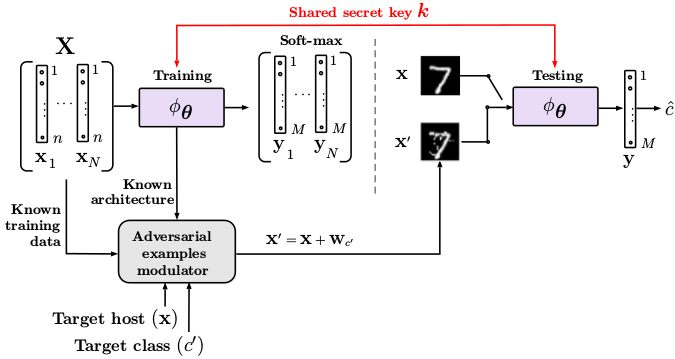

Fig. 1: Setup under investigation: the attacker knows the labeled training data set X and the system architecture, but does not have access to the defender's secret key k, which is shared between training and testing.

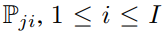

Multi-channel classification algorithm

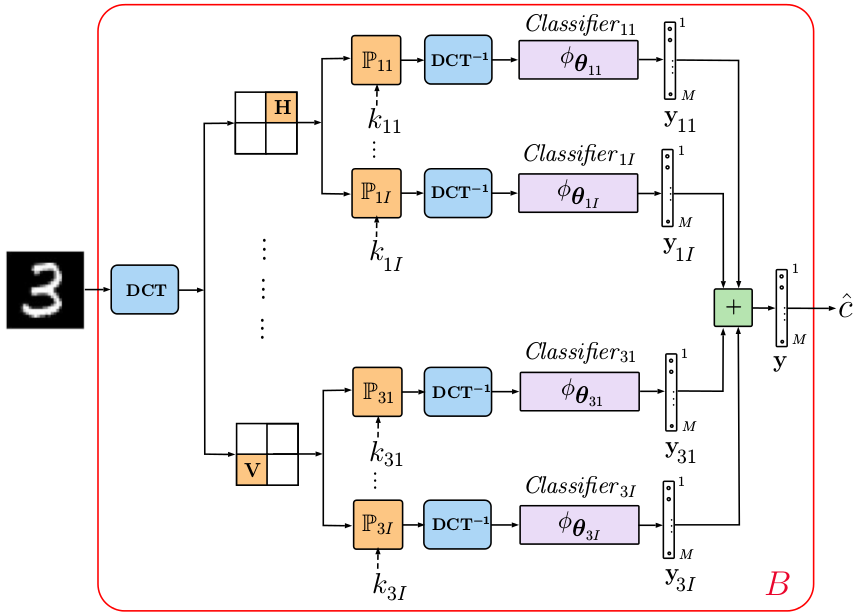

A multi-channel classifier shown in Fig. 2 forms the core of the proposed architecture and consists of four main building blocks:

- Pre-processing of the input data in a transform domain via a mapping.

- Data independent processing, which serves as a defense against gradient back propagation to the direct domain.

- Classification block, which can be represented by any family of classifiers.

- Aggregation block can be represented by any operation ranging from a simple summation to learnable operators adapted to the data or to a particular adversarial attack.

The chain of the first three blocks can be organized in a parallel multi-channel structure that is followed by one or several aggregation blocks. The final decision about the class is made based on the aggregated result. A rejection option can also be naturally envisioned.
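The four blocks and their parallel arrangement can be sketched in a few lines of NumPy. This is only a minimal illustration under stated assumptions, not the authors' PyTorch implementation: the class `Channel`, the function `classify`, the random orthonormal transform, the key-seeded sign flip, the untrained linear softmax classifier, and summation as the aggregation operator are all hypothetical choices made for the example. The sketch also feeds the randomized transform coefficients directly to the classifier, which is an assumption.

```python
import numpy as np


def softmax(z):
    """Numerically stable softmax over a 1-D score vector."""
    z = z - z.max()
    e = np.exp(z)
    return e / e.sum()


class Channel:
    """One channel: transform -> key-based processing -> classifier (all illustrative)."""

    def __init__(self, dim, n_classes, key):
        rng = np.random.default_rng(key)  # the secret key seeds everything key-dependent
        # Block 1 (assumption): an orthonormal transform obtained from a QR factorization.
        self.W, _ = np.linalg.qr(rng.standard_normal((dim, dim)))
        # Block 2 (assumption): data-independent processing as a key-defined sign flip.
        self.signs = rng.choice([-1.0, 1.0], size=dim)
        # Block 3 (assumption): an untrained linear classifier standing in for any model.
        self.theta = 0.1 * rng.standard_normal((n_classes, dim))

    def soft_output(self, x):
        randomized = self.signs * (self.W @ x)  # transform, then key-based randomization
        return softmax(self.theta @ randomized)


def classify(x, channels):
    """Block 4 (assumption): aggregate soft outputs by summation, then take the argmax."""
    scores = sum(ch.soft_output(x) for ch in channels)
    return int(np.argmax(scores)), scores / len(channels)


# Usage: three channels, each with its own key, applied to one flattened input.
channels = [Channel(dim=16, n_classes=3, key=k) for k in (1, 2, 3)]
label, mean_scores = classify(np.ones(16), channels)
```

Summation is the simplest aggregation the text allows; a learnable operator would replace the `sum` in `classify`.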

Fig. 2: Generalized diagram of the proposed multi-channel classifier.

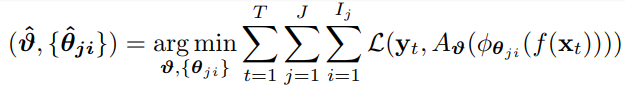

The training of the described algorithm can be represented as:

with

![]()

where ![]() is a classification loss, ![]() is a vectorized class label of the sample ![](), ![]() corresponds to the aggregation operator with parameters ![](), ![]() is the ith classifier of the jth channel, ![]() denotes the parameters of the classifier, ![]() equals the number of training samples, ![]() is the total number of channels and ![]() equals the number of classifiers per channel.
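One plausible way to write an objective consistent with these definitions is sketched below. The symbol names are assumptions made for illustration and may differ from the paper's notation: `\mathcal{L}` for the classification loss, `y_t` and `x_t` for the label and sample, `A_{\vartheta}` for the aggregation operator with parameters `\vartheta`, `\phi_{\theta_{ji}}` for the ith classifier of the jth channel with parameters `\theta_{ji}`, `W_j` for the transform-domain mapping, `P_{ji}` for the data-independent processing, and `T`, `J`, `I` for the numbers of training samples, channels, and classifiers per channel.

```latex
\begin{aligned}
(\hat{\vartheta}, \{\hat{\theta}_{ji}\})
  &= \operatorname*{arg\,min}_{\vartheta,\, \{\theta_{ji}\}}
     \sum_{t=1}^{T} \mathcal{L}\Big( y_t,\;
       A_{\vartheta}\big( \{ \phi_{\theta_{ji}}(\tilde{x}_{t,ji}) \}_{j=1,\dots,J;\; i=1,\dots,I} \big) \Big), \\
\tilde{x}_{t,ji} &= P_{ji}\, W_j\, x_t .
\end{aligned}
```

In this form the aggregation operator receives the soft outputs of all channel classifiers for a sample, and the loss compares the aggregated score with the label.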

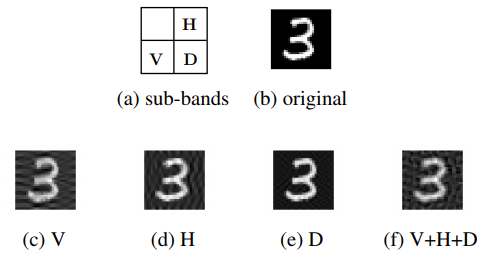

Classification with multi-channel local sign permutation in the DCT domain

The generalized diagram of the multi-channel architecture with local sign permutation in the DCT domain is illustrated in Fig. 3. The general idea of local sign permutation is that the DCT domain can be split into overlapping or non-overlapping sub-bands of different sizes. For simplicity and interpretability, we split the DCT domain into 4 sub-bands, namely, (1) top left, which represents the low frequencies of the image, (2) vertical, (3) horizontal and (4) diagonal sub-bands, as shown in Fig. 4. The DCT sign flipping is applied as a secret key-based randomization in one sub-band at a time, keeping all other sub-bands unchanged.
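The key-based sign flipping in one sub-band can be illustrated with a short NumPy sketch. It is not the authors' code and rests on several assumptions: a square, even-sized, single-channel image, four equal non-overlapping quadrants as sub-bands, a key used as a random seed, and a return to the pixel domain after randomization. The names `dct_matrix`, `SUBBANDS`, and `flip_subband_signs` are hypothetical. The top-left quadrant holds the low frequencies and the bottom-right the diagonal sub-band. The other two quadrants are the vertical and horizontal sub-bands, so the sketch names quadrants by position rather than guessing which is which.

```python
import numpy as np


def dct_matrix(n):
    """Orthonormal DCT-II matrix C, so that C @ C.T is the identity."""
    k = np.arange(n)[:, None]  # frequency index (rows)
    m = np.arange(n)[None, :]  # sample index (columns)
    C = np.sqrt(2.0 / n) * np.cos(np.pi * (2 * m + 1) * k / (2 * n))
    C[0, :] /= np.sqrt(2.0)    # rescale the DC row to make the matrix orthonormal
    return C


# Quadrant positions (row block, column block) in the 2-D DCT coefficient array.
SUBBANDS = {
    "top_left": (0, 0),      # low frequencies
    "top_right": (0, 1),
    "bottom_left": (1, 0),
    "bottom_right": (1, 1),  # diagonal sub-band
}


def flip_subband_signs(image, key, subband):
    """Flip DCT coefficient signs in one quadrant with a key-seeded pattern."""
    n = image.shape[0]
    h = n // 2
    C = dct_matrix(n)
    coeffs = C @ image @ C.T  # forward 2-D DCT
    r, c = SUBBANDS[subband]
    rows = slice(r * h, (r + 1) * h)
    cols = slice(c * h, (c + 1) * h)
    # The key defines the sign pattern; all other sub-bands are left unchanged.
    signs = np.random.default_rng(key).choice([-1.0, 1.0], size=(h, h))
    coeffs[rows, cols] *= signs
    return C.T @ coeffs @ C   # inverse 2-D DCT (assumption: classifier sees pixels)


# Usage: one channel per sub-band, each with its own key.
image = np.random.default_rng(0).random((8, 8))
channel_inputs = [
    flip_subband_signs(image, key=k, subband=name)
    for k, name in enumerate(SUBBANDS, start=1)
]
```

Because every sign squares to one, applying the same key to the same sub-band a second time restores the original image, which is one way to check that the randomization is lossless for whoever holds the key.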

Fig. 4: Local randomization in the DCT sub-bands by key-based sign flipping.

Fig. 3: Classification with local DCT sign permutations.

Conclusions

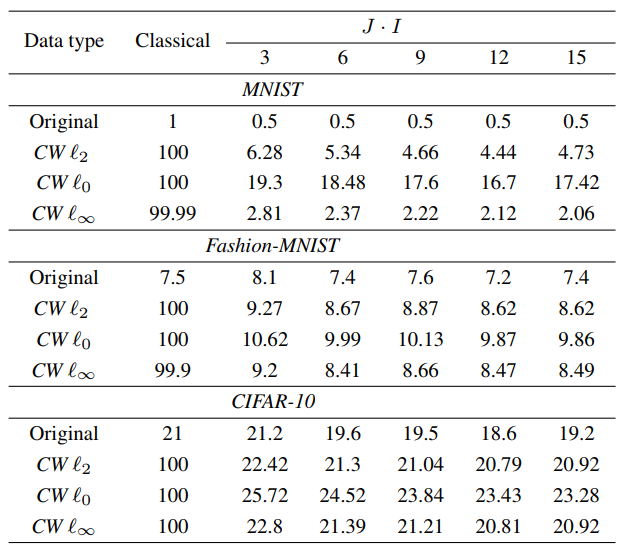

In our paper, we address the problem of protecting classification systems against adversarial attacks. We propose a randomized diversification mechanism as a defense strategy in a multi-channel architecture with aggregation of the classifiers’ scores. The randomized diversification is a secret key-based randomization in a defined domain. The goal of this randomization is to prevent gradient back propagation or the use of bypass systems by the attacker. We evaluate the efficiency of the proposed defense and the performance of several variations of the new architecture on three standard data sets against a number of known state-of-the-art attacks. The numerical results demonstrate the robustness of the proposed defense mechanism against adversarial attacks and show that using the multi-channel architecture with subsequent aggregation stabilizes the results and increases the classification accuracy.

In future work, we aim to investigate the proposed defense strategy against gradient-based sparse attacks and non-gradient-based attacks.